I guess it’s time to start posting here again.


2/22

I’m working on the art of throwing one’s opponents off balance by agreeing with them. I hope to get a black belt in Okidokan.

It was a week for reporting on your vaccination status, thinking about life on Mars, and checking on friends in Texas. This week’s post includes tech flashbacks, a book report, new research on the origin of life, some light verse on brain physiology, a bit of literary slander, and Five Links.

Technology

Glory Days

“This was the 1983 January Carmel MAC Offsite — the one where we got the ‘90 Hours a Week and Loving It’ sweatshirts. And at the beginning of the second day, Steve started off the presentations from the teams with three things for us to remember. One was ‘real artists ship.’ The second was, ‘It’s more fun to be a pirate than to join the Navy.’ The third one was ‘Mac in a book by 1985,’ which was his long-term vision. Long-term then was two years.”

— Chris Espinosa, personal communication.

Also this, thanks to Scott Mace:

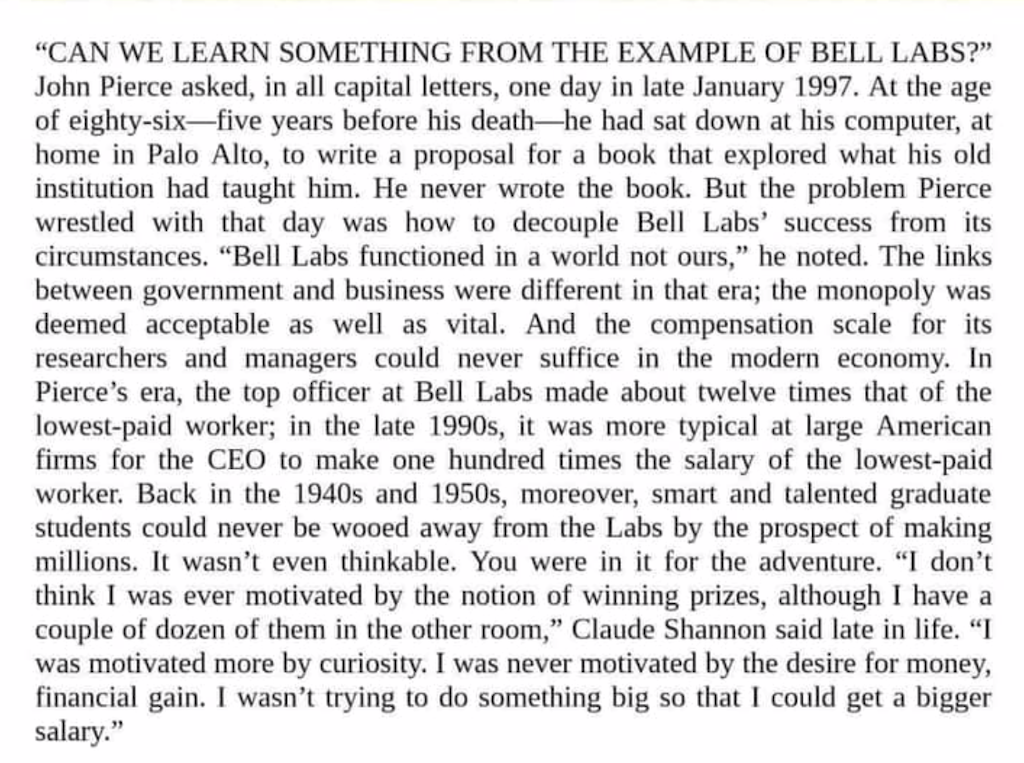

I don’t know. I’ll ask again. Can we learn something from the example of Bell Labs?

Did Darwinian Evolution Precede Life?

While Perseverance collects cigar-tube samples that could show that life once existed on Mars, a puzzle of evolution lurks behind the search. Cellular life evolved over billions of years on Earth, but the development of cellular life itself apparently happened, on a geological scale, almost as soon as it was possible. Some folks think this calls for an explanation. One possibility that the samples from Perseverance could shed light on is that life on Earth actually came here from Mars. We could actually be Martians.

Another, less science-fiction-y explanation for the rapid development of terrestrial life is suggested in this Phys.org article.

This part, not central to the article, caught my attention:

“Braun’s research group explored how spatial order could have developed in narrow, water-filled chambers within porous volcanic rocks on the sea bottom. These studies showed that, in the presence of temperature differences and a convective phenomenon known as the Soret effect, RNA strands could locally be accumulated by several orders of magnitude in a length-dependent manner.”

I may be misreading it, but to me this vividly illustrates the idea that environment not only drives evolution but can constrain it to narrow channels. Here the narrow channels are literal: those “narrow, water-filled chambers” in which long chains of molecules form.

But aside from my possible misreading, the article tackles a question that I think is endlessly varied and endlessly fascinating: How did this complexity emerge? Worth a read.

Tip for photographers wanting sharper images, based on NASA photography: less air.

Writing

Book Report

Two of the books that I am editing are scheduled to go to Production the first week in March: Kotlin and Android Development featuring Jetpack, by Michael Fazio, and Web Development with Clojure, Third Edition, by Dmitri Sotnikov and Scot Brown. They’re both available now in beta versions and will be published soon by The Pragmatic Bookshelf.

“Two of the things I’ve wanted to do most in my life were to write a book and create a baseball simulator, and I was able to do both at once. I included Android Baseball League because it’s an excellent advanced app, but man, was it ever fun to put together.” — Michael Fazio

“A good software project is like a bonsai. You have to meticulously craft it to take the shape you want, and the tool you use should make it a pleasant experience. We hope to convince you that Clojure is that tool.” — Dmitri Sotnikov and Scot Brown

What Are Words For?

“The vitality of language lies in its ability to limn the actual, imagined and possible lives of its speakers, readers, writers. Although its poise is sometimes in displacing experience it is not a substitute for it. It arcs toward the place where meaning may lie. When a President of the United States thought about the graveyard his country had become, and said, ‘The world will little note nor long remember what we say here. But it will never forget what they did here,’ his simple words are exhilarating in their life-sustaining properties because they refused to encapsulate the reality of 600,000 dead men in a cataclysmic race war. Refusing to monumentalize, disdaining the ‘final word,’ the precise ‘summing up,’ acknowledging their ‘poor power to add or detract,’ his words signal deference to the uncapturability of the life it mourns. It is the deference that moves her, that recognition that language can never live up to life once and for all. Nor should it. Language can never ‘pin down’ slavery, genocide, war. Nor should it yearn for the arrogance to be able to do so. Its force, its felicity is in its reach toward the ineffable.”

— Toni Morrison

Gatsby

I know I’m missing the irony. I know that none of this criticism is fair. But I have to get it off my chest anyway.

Nick Carraway’s distinctive voice is the result of Fitzgerald’s earnest attempt to have his narrator sound like a stockbroker who fancies himself a writer. It succeeds.

Nobody ever arrived at the death of Jay Gatsby and thought, “that ended well.” Nobody but Nick Carraway.

When you make the climax of your novel, the death of your central character, a random, meaningless chance event, you are making a point. Whatever that point is, it’s not about boats beating against the past.

The green light. The valley of ashes. The giant eyes looking down on everyone. This is writing with a crayon.

Sorry/not sorry.

Now Where Did I Put That Thought?

Sometimes I write light verse.

Cerebrum, cerebellum,
Medulla oblongata:
Somewhere in there I stashed away
What things I shoulda thoughta,

While areas of Broca,
Wernicke, and Brodmann
Allow me to articulate
What thoughts I’ve not forgotten.

The true, the just, the good:
I struggle to explain ’em;
Harder yet to say how could
The cranium contain ’em.

Five Links

Watch NASA’s Mars Perseverance Rover Safely Land on Mars

Are You on the FBI’s New Insurrection-Specific Most Wanted List?

Why the Texas Blackout Happened

Did you know that France has 363 AOCs to America’s 1? You can look it up.

Reboot

I’ve removed old content in preparation for rebooting this blog. We’ll see if that actually happens.